Tackling the Disinformation Ecosystem: System-Based Insights from Southeast Asia

With around 64.3 percent of its 680 million people active on social media, Southeast Asia has evolved into a highly fertile environment for influence operations that erode democratic quality and strain social cohesion. As these operations grow more sophisticated and increasingly enlist ordinary citizens motivated by economic incentives and ideological commitments, fragmented responses prove inadequate. Instead, a system-oriented approach would address not only platform algorithms but also the political, economic, and identity-based interests that sustain and drive influence campaigns.

Introduction

Influence operations (IOs) are large-scale, covert efforts by state and non-state actors to shape public opinion. These actors strategically deploy not only falsehoods but also so-called curated facts, so IOs closely overlap with disinformation and digital propaganda. Both anonymous actors and identifiable accounts rely on specific techniques and exploit social media infrastructure to maximize reach and virality.

In Southeast Asia, coordinated influence has increasingly functioned as a form of brokerage during elections and a tool of autocratic governance. Existing research highlights the inauthentic behavior that characterizes IOs, including identity concealment, automated user interactions, and coordinated posting to intensify selected narratives. While this sort of inauthenticity still characterizes some IOs in Southeast Asia, new research points to the advent of IOs coordinated by ordinary people and domestic stakeholders with transparent goals and identities. These IOs complicate the increasingly sophisticated system of information disorder, or the pollution of the information ecosystem with misinformation. However, analysis of the research suggests that a multi-pronged, system-based approach is better suited to combating these changing IOs.

Shifting Dynamics

A 2024-2025 study of influence operations in Southeast Asia confirms that existing misinformation patterns persist. The study was conducted through the RAIDAR (Researching Asian Information Disorder and Responses) project at Thailand’s Chulalongkorn University, an initiative supported by Canada’s International Development Research Centre, in collaboration with researchers from Malaysia, Indonesia, Thailand, and the Philippines. At the same time, three major pattern changes clarify how these operations function.

First, the study shows that the growing role of non-state actors, including ordinary users, influencers, and private firms, overshadows state-directed IO efforts. A striking case is the surge of anti-Rohingya sentiment in Indonesia between 2023 and early 2024. Before the 2024 national election, coordinated influence campaigns spread online rumors claiming that Rohingya refugees in Aceh province were exploiting local communities by discarding donated food and stealing from villagers. Electoral candidates who expressed support for the Rohingya were also targeted. These narratives escalated tensions and culminated in a mob attack on a refugee shelter in Aceh in December 2023, which in turn prompted international condemnation of mobilized hate speech.

At first glance, these posts resemble typical coordinated campaigns with synchronized timing and similarly crafted messages. However, interviews with “buzzers,” the Indonesian colloquial name for influence workers, behind these campaigns revealed a more complex and troubling picture. These workers, including homemakers, single mothers, and volunteers for political parties, were not solely motivated by payment or, in the case of party volunteers, by prospects of promotion within the party. Instead, they articulated a feeling of moral obligation and framed their participation in xenophobic messaging as a necessary act of defending the homeland from perceived foreign threats. When ordinary users drive IO campaigns with emotionally charged content of mixed accuracy, they challenge the platform enforcement models designed to detect and suspend accounts associated with Coordinated Inauthentic Behavior (CIB), because the accounts involved belong to real people acting under their own identities.

Second, the same platform algorithms that personalize and recommend commercial content also cultivate bottom-up IOs driven by political fans, reinforcing political personalism, or the concentration of authority around individual leaders, and subsequently reshaping party politics. In Thailand, this form of “political fandom” gained visibility during the 2023 general election. Following the youth-led pro-democracy protests in 2020-2021 and growing public dissatisfaction with the pro-establishment, military-backed government, the opposition Move Forward Party’s prime ministerial candidate, Pita Limjaroenrat, drew a substantial youth audience by positioning himself as the bulwark of good governance and democracy in the lead-up to the 2023 election. By May 2023, his fandom intensified largely through campaigns on X and TikTok, where creators compared him to a Korean pop star. Supporters exploited platform algorithms to synchronize messaging and boost visibility. Meanwhile, according to the project’s Thai researcher, supporters of pro-establishment candidates spread manipulated images and misleading information regarding Pita and his party through fan pages and groups on platforms such as LINE and Facebook.

In the Philippines, a similar pattern of fandom persists. Throughout the 2016 and 2022 election cycles and former President Duterte’s presidency (2016-2022), Duterte’s support stemmed from his curated image as a strongman. His rhetoric emphasized order before law, which he used to justify his brutal war on drugs, a crackdown that resulted in the deaths of at least 30,000 individuals, and repressive measures against civil society critics. While Duterte relied on political influencers throughout his presidential campaigns and administration, it was his domestic and overseas Filipino “die-hard fans” who consistently defended his hardline policies amid intense international criticism of his war on drugs. His successor and political rival, Ferdinand Marcos Jr., has adopted similar strategies and further entrenched the trend of personalist politics through algorithmically fueled influence networks.

Collectively, ordinary users, the fans, adopt typical IO practices to mobilize support for their political “idols.” Political fandom’s growing prominence across the region calls into question the relevance of policy platforms in modern election campaigns and raises the question of whether party politics risks becoming increasingly deinstitutionalized in favor of charismatic figureheads, a persistent trend globally and one increasingly normalized in Southeast Asia.

Third, while traditional influence campaigns have long flourished on text-based platforms like Facebook or X, they struggle to gain similar traction on newer platforms such as TikTok, whose visual formats and algorithmic designs prioritize creative, self-produced content and a sense of authenticity. Malaysia illustrates this challenge well. Traditional cybertroop operations, which have often been linked to ruling parties in Malaysia, have had difficulty adapting their copy-and-paste messaging to TikTok’s algorithmic environment. As the project’s Malaysian researcher notes, TikTok’s “For You” feed and livestream features promote seemingly genuine, amateur-style presentations, complicating anonymous cybertroops’ attempts to sustain meaningful engagement. As a result, social media personalities with distinct styles and recognizable content-making approaches thrive on TikTok. These platforms force the operative mode of IOs to shift from automated, faceless accounts to influencer-driven strategies rooted in personality, visibility, and brand-building. The rise of such networks therefore reinforces the broader trends discussed earlier, such as the growing prominence of ordinary users as influence actors and the growing relevance of political “idols” and fandom-like support bases in shaping contemporary political communication.

Impacts and Responses

Building on the analysis of shifting IO patterns, the RAIDAR project conducted an expert survey in 2025, using purposive sampling to select one hundred respondents for their high level of expertise on disinformation in Southeast Asia. The questionnaire, analyzed with standard statistical methods, assessed how three key algorithmic tactics used in IO campaigns affected electoral processes, information credibility, and social cohesion. The tactics were coordinated “astroturfing” (or cheerleading) by IO workers and political fans, organized harassment (or trolling), and microtargeted advertising. The study found that all three tactics broadly harmed the quality of public discourse. Respondents perceived harassment as most severely damaging to targeted individuals, while they viewed microtargeted advertising as particularly harmful to electoral processes.

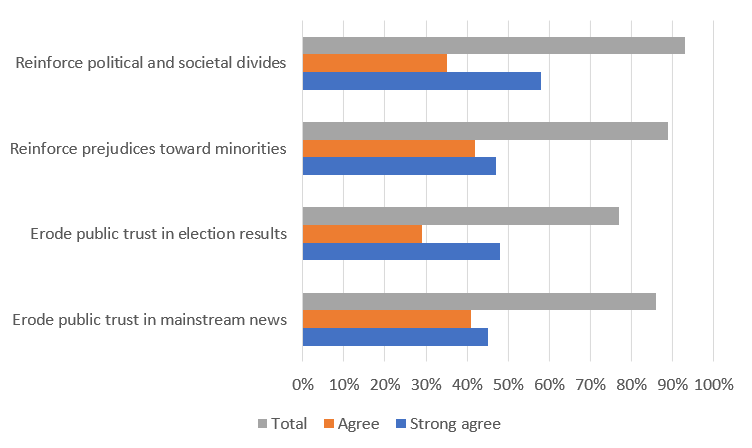

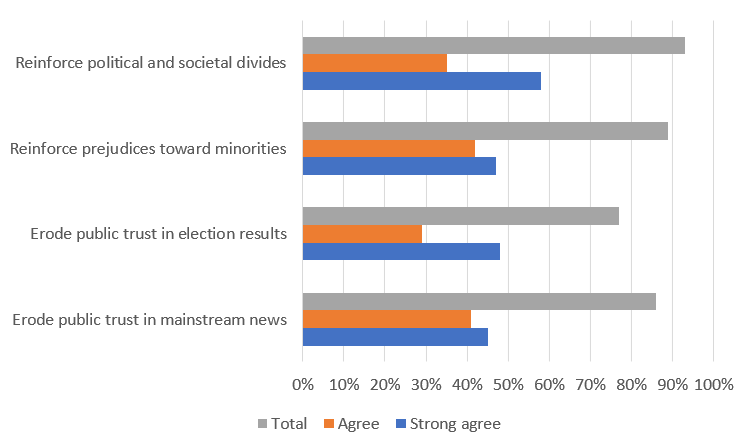

More specifically, over 80 percent of respondents answered “strongly agree” or “agree” to the claim that manipulated content reinforced political and social divides, fueled prejudice against minorities, and eroded trust in both election results and traditional media (see Figure 1 below). A large majority (49 percent “strongly agree” and 43 percent “agree”) also viewed financial incentives for viral content as a key driver of extreme viewpoints. Most respondents (51 percent “strongly agree” and 37 percent “agree”) saw the lack of oversight on social media platforms as most damaging. However, nearly 90 percent of respondents (56 percent “strongly agree” and 33 percent “agree”) remained wary of government-led regulation of disinformation, noting the risk of anti-democratic actors weaponizing such measures to restrict political freedoms and suppress open debate.

Figure 1: Survey responses on the impact of content manipulation on political and social divide, prejudices toward minorities, trust in election results, and confidence in mainstream media.

Source: Elaboration from survey analysis by Stephen “Tyler” Williams, the project’s quantitative research consultant

The research findings ultimately reinforce the emerging “system-based” approach to tackling the disinformation ecosystem. Disinformation is not merely a matter of falsehoods that can be corrected through content verification. As seen across Southeast Asia, a wide array of actors, ranging from anonymous, automated accounts to highly visible influencers and political “fans,” sustain influence campaigns in pursuit of their economic, ideological, and political incentives. The revenue-driven algorithmic designs of major platforms exacerbate this process.

Policy Recommendations

Addressing this ecosystem thus requires coordinated engagement from multiple stakeholders, including governments, intergovernmental organizations, civil society actors, and the platforms themselves. Above all, this multi-pronged framework should tackle both the political utility of coordinated disinformation during electoral cycles and the socio-economic incentives that draw ordinary users into such campaigns. These motivations align closely with platform business models and algorithmic architectures that monetize viral and emotionally charged content, thereby fostering the infrastructure of IOs.

At the core of this framework is the need to demonetize and disincentivize malign IOs by engaging with “watchdog” actors and addressing socioeconomic drivers of the disinformation industry. During elections, national election commissions and civil society-based election observation groups should closely monitor political advertising purchased by parties and affiliated influencers. Sustained research on evolving influence tactics, both during and beyond electoral cycles, should reinforce this oversight.

In the long term, regional organizations such as the Association of Southeast Asian Nations (ASEAN) should leverage platforms’ reliance on Southeast Asia’s sizable markets to push for greater transparency in algorithmic design and content governance that prioritizes society before profit. Beyond platform governance, policymakers must also address structural conditions, such as economic precarity, mistrust in traditional media and political institutions, and widening social divides, that make citizens susceptible to participation in influence campaigns. Any regulatory response should avoid excessive restrictions and include democratic safeguards, ensuring accountability, redress, and multistakeholder participation. Ultimately, reducing the incentives for malign IOs must go hand in hand with strengthening a digital ecosystem that supports accurate, trustworthy, and diverse information.

. . .

Janjira Sombatpoonsiri is an Assistant Professor at the Institute for Asian Studies at Chulalongkorn University and a Research Fellow at the German Institute for Global and Area Studies (GIGA). She currently leads three major research projects on digital authoritarianism in Asia, influence operations and conflict narratives in Southeast Asia, and democratic resilience across the region. Her forthcoming book, Death by a Thousand Cuts: Digital Repression and Democracy in Thailand, will be published by the University of Wisconsin Press. She is also a member of the Carnegie Endowment for International Peace’s Digital Democracy Network and a regional manager for the Digital Society Project.

Image Credit: Rameshe999, CC BY-SA 4.0, via Wikimedia Commons