PART I: Killer Robots: A Third Revolution in Warfare?

The article below is the first installment in a two-part series. Artificial intelligence can benefit humanity, or it can be married with weapons to create fully autonomous killing machines. Are people prepared for this third revolution in warfare? What are the pros and cons of such weapons, and should they be banned from the battlefield?

Overview

Gunpowder first revolutionized warfare, followed by a second, far more destructive revolution: nuclear weapons. The nine countries possessing nuclear weapons hold the fate of the world (nearly eight billion lives) in their hands. Now the marriage of weapons of war and artificial intelligence (AI) into fully autonomous weapons systems, or killer robots, further threatens the world’s future in incalculable ways.

To most people, such weapons seem like science fiction. But for those who contribute to their ongoing development, production, and testing, whether academics and universities, computer scientists and roboticists, or the weapons industry and the militaries that fund them, killer robots are very real. In a five-year period ending in 2023, the Pentagon’s Defense Advanced Research Projects Agency (DARPA) aims to spend billion on AI. Organized movements and coalitions must confront the delegation of life-and-death decisions to algorithm-driven weapons.

One such coalition, the Campaign to Stop Killer Robots, was launched in April 2013. The campaign does not oppose AI and autonomy on principle. Rather, it believes not only that death by autonomous machines is morally and ethically bankrupt, but also that the use of such weapons would violate human rights and humanitarian law (the laws of war). Building on the work of earlier humanitarian disarmament campaigns that led to the 1997 Mine Ban Treaty, the 2008 Convention on Cluster Munitions, and the 2017 Treaty on the Prohibition of Nuclear Weapons, the Campaign to Stop Killer Robots seeks a new treaty requiring that human beings be meaningfully and directly involved in all targeting and kill decisions. Such a treaty would effectively “ban” fully autonomous weapons.

Within months of the campaign’s launch, governments responded by opening discussions on autonomous weapons in 2013 at the United Nations in Geneva, during meetings of the Convention on Certain Conventional Weapons (CCW). Negotiated at the end of the Vietnam War, the CCW entered into force on December 2, 1983, and bans or restricts the use of weapons “considered to cause unnecessary or unjustifiable suffering to combatants or to affect civilians indiscriminately.” Each of the protocols attached to the Convention deals with a different category of weapon, and new protocols can be added, potentially including one on autonomous weapons. Unfortunately, more than seven years later, the CCW meetings have done little more than tread water while research and development on weaponized AI continue at a dizzying pace.

Killer Robots: Pros, Cons, and Why They Should Be Prohibited

When most people hear “autonomous weapons” or “killer robots,” they assume the terms are synonyms for drones. They are not, but drones are a useful point of departure for grasping the concept of fully autonomous weapons. The simplest of these weapons systems would resemble drones with no human pilot or human control once launched.

With the semi-autonomous drones in use today, human pilots can remain safely thousands of miles from their targets. However, although these drones can fly thousands of miles on their own, human pilots must still identify their targets and make the so-called “kill decision.” The drone remains a weapon in the hands of a human being who ultimately decides whether to use its missiles to attack other human beings.

Autonomous weapons systems would range from a “human-free drone” to complex swarms of weapons operating independently of humans once launched. On December 15, 2020, the US Air Force carried out its first test of such weapons as part of its Golden Horde program. “The ultimate aim of this effort is to develop artificial intelligence-driven systems that could allow the networking together of various types of precision munitions into an autonomous swarm.”

Additionally, in early 2020, the Pentagon’s Joint Artificial Intelligence Center began work on a project that “would be its first AI project directly connected to killing people on the battlefield.” The plan is to begin using it in combat this year.

Therein lies the problem. Beyond crossing the moral and ethical Rubicon of creating and using weapons that can attack and kill people on their own, such weapons would violate provisions of the laws of war as well as human rights law.

Pros

Proponents extol what they see as the many potential virtues of autonomous weapons. In theory, countries with autonomous weapons would have strategic and tactical advantages over those without them. Robotic weapons would also act as force multipliers, reducing human involvement in some missions or eliminating it altogether, which would lower military casualties.

Autonomous weapons would be able to receive, process, and react to information far more quickly than any human being. Largely autonomous defensive weapons, such as the US Phalanx and the Israeli Iron Dome, can already intercept incoming rockets and missiles. Autonomous weapons could also operate in areas inaccessible to human soldiers, thus expanding battlefields. Nor would the machines be subject to the human emotions and frailties, such as exhaustion, fear, or boredom, that can impair soldiers in battle. Replacing soldiers with machines could make going to war a less emotionally draining experience.

. . .

Jody Williams received the Nobel Peace Prize in 1997 for her work to ban antipersonnel landmines, which she shared with the International Campaign to Ban Landmines. She is a co-founder of the Campaign to Stop Killer Robots. Williams also chairs the Nobel Women’s Initiative, which brings together five women Nobel Peace Prize recipients to support women’s organizations working in conflict zones to bring about sustainable peace with justice and equality.

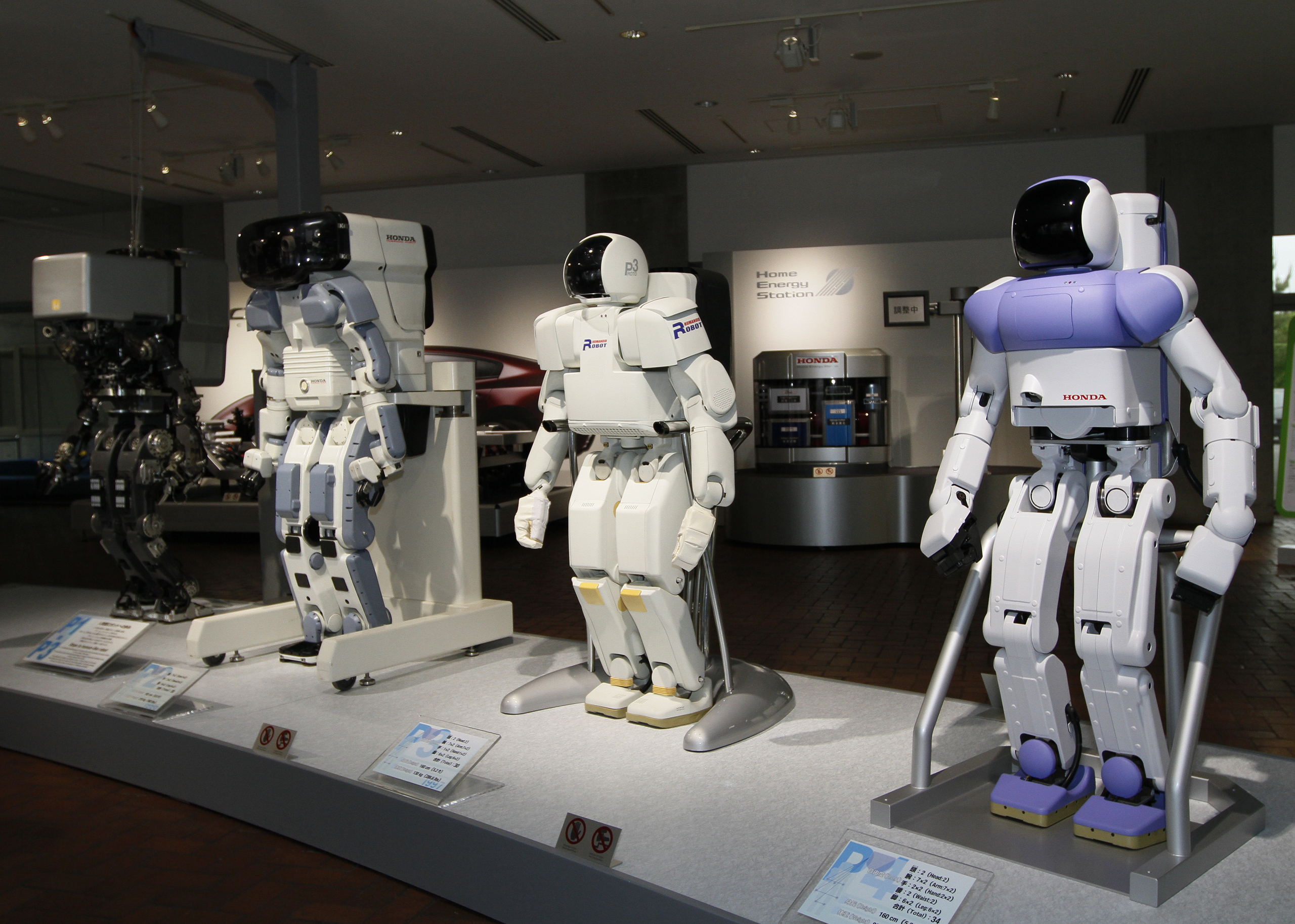

Image Credit: Morio (via Creative Commons)